© Fraunhofer ISST

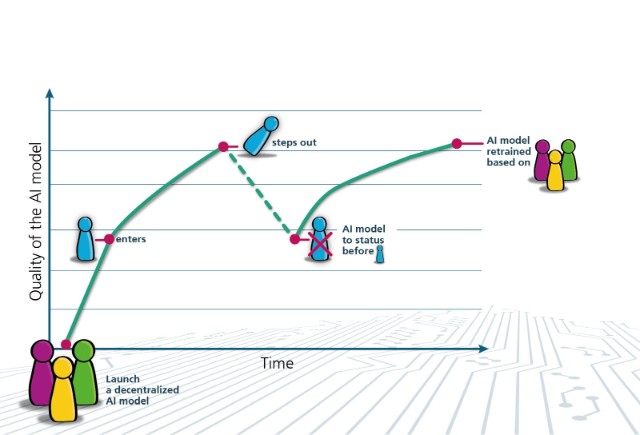

With federated unlearning, when a data provider leaves, a decentralized AI model is rolled back to the state it was in before that provider joined. From that point on, it is retrained.

Developing AI models in joint projects yields better results because multiple companies contribute their data. The situation becomes critical, however, when a partner withdraws and requests the return of its data. With federated unlearning, Fraunhofer researchers and Fujitsu Research have developed a method for precisely removing data from decentralized AI models.
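To make the rollback-and-retrain idea from the caption concrete, here is a minimal toy sketch in Python. It is not the method developed by Fraunhofer and Fujitsu Research, whose details are not given here. It only illustrates the general principle, assuming a global model that saves a checkpoint each time a provider joins. All names (`FederatedModel`, `make_provider`, `join`, `leave`) are hypothetical, and simple averaging of local updates stands in for real federated training.

```python
class FederatedModel:
    """Toy global model: one weight vector plus a checkpoint per joining provider."""

    def __init__(self, dim):
        self.weights = [0.0] * dim
        self.providers = {}     # provider name -> local update function
        self.checkpoints = {}   # provider name -> weights saved just before it joined
        self.join_order = []    # provider names in the order they joined

    def join(self, name, local_update):
        # Save the state the model was in before this provider contributed anything.
        self.checkpoints[name] = list(self.weights)
        self.providers[name] = local_update
        self.join_order.append(name)

    def train_round(self):
        # Each provider computes a local update; the global model averages them.
        updates = [fn(self.weights) for fn in self.providers.values()]
        if not updates:
            return
        self.weights = [sum(col) / len(updates) for col in zip(*updates)]

    def leave(self, name, retrain_rounds):
        # Roll back to the state before the departing provider joined.
        self.weights = list(self.checkpoints[name])
        later = self.join_order[self.join_order.index(name) + 1:]
        del self.providers[name]
        del self.checkpoints[name]
        self.join_order.remove(name)
        # Simplification (an assumption of this sketch): checkpoints of providers
        # that joined later were influenced by the leaver, so reset them too.
        for other in later:
            self.checkpoints[other] = list(self.weights)
        # Retrain from the rolled-back state with the remaining providers only.
        for _ in range(retrain_rounds):
            self.train_round()


def make_provider(target):
    """Hypothetical provider whose local update moves weights halfway to its target."""
    def local_update(weights):
        return [w + 0.5 * (t - w) for w, t in zip(weights, target)]
    return local_update
```

In this sketch, calling `leave("B", retrain_rounds=0)` restores exactly the weights the model had before `B` joined. A positive `retrain_rounds` then continues training on the remaining providers' data alone.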
